Free Solution Selection Matrix Template for RFPs (Excel)


You may spend days reviewing an RFP, coordinating with subject matter experts, and writing detailed responses, only to learn later that the buyer selected another vendor. In many cases, the loss is not due to product gaps. The proposal simply did not address the criteria the buyer used to evaluate vendors.

When you submit an RFP response, your proposal is often reviewed through a structured scoring method. Procurement teams commonly use a solution selection matrix to compare vendors on factors such as technical capability, cost, delivery timeline, and risk. If your answers do not clearly map to those criteria, reviewers may struggle to score your solution accurately.

In this guide, we’ll explore what a solution selection matrix is, how buyers use it to evaluate vendor proposals, what criteria typically appear in these matrices, and how you can structure your RFP responses around those criteria to improve your chances of being shortlisted.

Solution Selection Matrix Template (Excel) for RFP Vendor Evaluation

A solution selection matrix is a structured scoring framework buyers use to compare vendor proposals during the RFP review process. Each proposal is evaluated using the same criteria so procurement teams, technical reviewers, and business stakeholders can assess vendors using a consistent method.

Many procurement teams apply percentage-based weighting, where all criteria weights add up to 100%. This keeps the final score simple to interpret and allows executives to see which vendor performed best across the evaluation categories.

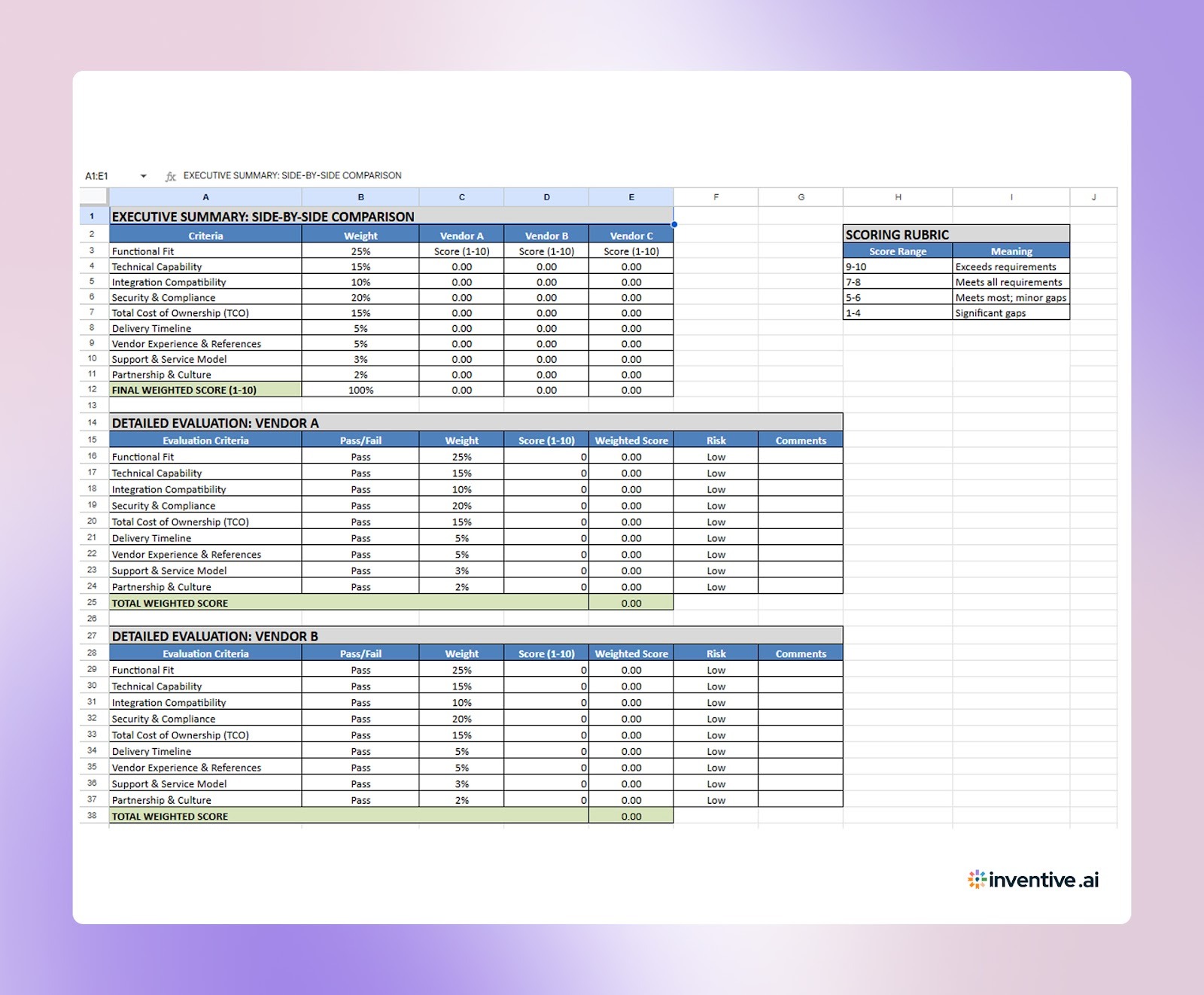

Buyers often use a solution selection matrix like this to score vendor responses and compare proposals during the RFP evaluation process:

Solution Selection Matrix (Evaluation Template)

Total Score represents the final vendor rating once all weighted scores are added together. This number is often used during procurement reviews when leadership compares shortlisted vendors.
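As a rough illustration of how a weighted total can be calculated, here is a minimal Python sketch. The criteria names, the weights, the 1 to 5 scoring scale, and the function name `total_score` are assumptions made for this example, not values taken from any specific buyer's template.

```python
def total_score(weights, scores):
    """Return the weighted total for one vendor.

    weights: criterion -> percentage weight (expected to sum to 100)
    scores:  criterion -> raw score on whatever scale the buyer uses
    """
    if abs(sum(weights.values()) - 100) > 1e-9:
        raise ValueError("criteria weights must add up to 100%")
    missing = set(weights) - set(scores)
    if missing:
        raise ValueError(f"missing scores for: {sorted(missing)}")
    # Each raw score is multiplied by its share of the total weight.
    return sum(weights[c] / 100 * scores[c] for c in weights)


# Hypothetical weights and 1-5 scores, for illustration only.
weights = {"technical": 40, "cost": 25, "timeline": 20, "risk": 15}
vendor_a = {"technical": 4, "cost": 3, "timeline": 5, "risk": 4}
vendor_b = {"technical": 3, "cost": 5, "timeline": 3, "risk": 3}

totals = {
    "Vendor A": total_score(weights, vendor_a),
    "Vendor B": total_score(weights, vendor_b),
}
best = max(totals, key=totals.get)
print(totals, best)
```

Because the weights sum to 100%, the total stays on the same scale as the raw scores, which is what makes the final number simple to compare across vendors.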

How Vendors Can Use a Solution Selection Matrix Template (Excel) Before Submitting an RFP Response

Before submitting the final proposal, teams can run a short internal review using the matrix criteria. This helps highlight gaps, missing evidence, or sections that may receive lower scores during evaluation.

The following steps show how vendors can apply the matrix during proposal preparation:

Score Your Own Proposal Using Buyer Criteria

Start by reviewing the proposal using the same evaluation categories that typically appear in RFP scoring sheets. This quick scoring exercise provides an early view of how reviewers might assess the response.

Scores that fall in the middle range often signal areas where the response may need clearer explanation or stronger supporting information.
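One way to surface those middle-range scores is a small filter like the sketch below. It assumes a 1 to 5 self-scoring scale and treats 2.5 to 3.5 as the middle band. Both the scale and the band are illustrative choices, and `needs_attention` is a hypothetical helper name.

```python
def needs_attention(self_scores, low=2.5, high=3.5):
    """Return criteria whose self-assessed score falls in the middle band."""
    return [c for c, s in self_scores.items() if low <= s <= high]


# Hypothetical self-assessment on an assumed 1-5 scale.
self_scores = {"technical": 4, "security": 3, "pricing": 2.5, "delivery": 5}
print(needs_attention(self_scores))
```

The sections returned here are the ones to revisit first, since a clearer explanation or stronger evidence may be enough to move them up.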

Confirm All Mandatory Requirements Are Addressed

Many RFPs include non-negotiable requirements. If a proposal fails one of these items, it may be removed from consideration regardless of other scores.

Before submission, vendors can review mandatory requirements using a simple checklist.

This quick review helps confirm that required items are clearly addressed in the proposal.
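A checklist like this can be as simple as a pass or fail gate. The sketch below is one possible shape, with made-up requirement names and a hypothetical `unmet_mandatory` helper. Real mandatory items come from the RFP itself.

```python
def unmet_mandatory(checklist):
    """Return mandatory items that the proposal does not yet address."""
    return [item for item, addressed in checklist.items() if not addressed]


# Hypothetical mandatory requirements, marked True once addressed.
checklist = {
    "security certification attached": True,
    "data residency statement": False,
    "signed compliance form": True,
}

gaps = unmet_mandatory(checklist)
ready_to_submit = not gaps  # a single unmet item blocks submission
print(gaps, ready_to_submit)
```

Unlike weighted criteria, these items are not averaged into the total. One unmet requirement is enough to hold the proposal back.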

Check Whether Key Claims Are Supported

Buyers often score proposals based on the clarity and credibility of the information provided. Strong claims without supporting details may receive lower scores during evaluation.

During the internal review, teams can verify that important statements are supported with evidence.

Common supporting elements include:

- Product architecture diagrams

- Security documentation or certifications

- Customer references or case studies

- Detailed delivery plans and milestones

- Clear pricing explanations

Including this information helps reviewers understand how the solution meets the requirement.

Review Areas Where Buyers Often Compare Vendors

Buyers rarely review proposals in isolation. Instead, they compare vendors across several core areas before shortlisting finalists.

Proposal teams can review how their response performs in those comparison areas.

This internal comparison helps teams anticipate how buyers may view differences between vendors during the evaluation stage.

Make Final Improvements Before Submission

Once the internal review is complete, teams can revise sections that appear weaker or unclear. Small updates at this stage often improve how the proposal is understood during evaluation.

Common improvements include:

- Expanding technical explanations

- Adding diagrams or architecture details

- Clarifying delivery schedules

- Strengthening customer references

- Providing clearer cost breakdowns

Running this review before submission helps vendors present a proposal that maps closely to the criteria buyers often use when scoring vendor solutions.

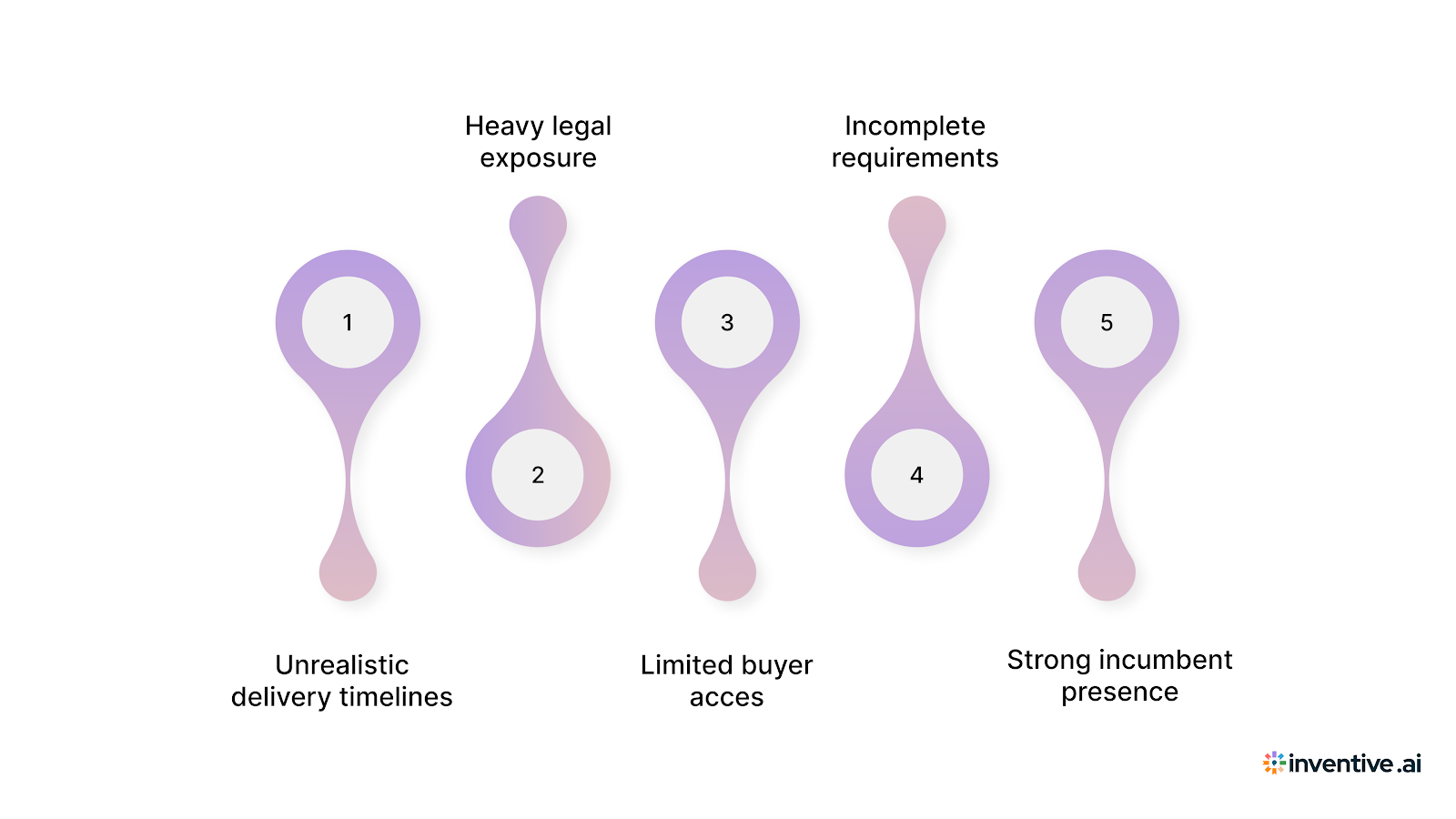

Common RFP Red Flags Vendors Should Spot Early

Even when a deal looks attractive on paper, certain signals can reveal hidden risk. Adding a risk check to your matrix helps the team pause and review factors that could affect delivery, profitability, or deal success.

Watch for warning signs like the ones below when reviewing an RFP.

- Unrealistic delivery timelines: If the buyer expects delivery faster than your team can reasonably provide, the project may create internal pressure and missed commitments.

- Heavy legal exposure: Some contracts include penalties, unlimited liability, or strict compliance terms that may create financial risk.

- Limited buyer access: When vendors cannot speak with the evaluation team or stakeholders, it becomes harder to clarify requirements or position the solution.

- Incomplete requirements: RFPs that lack clear details about the project scope can lead to incorrect assumptions and scope changes later.

- Strong incumbent presence: If a current vendor has a long-standing relationship with the buyer, the RFP may only serve as a formal procurement step.

When teams review these signals early, they can prepare stronger responses and address potential concerns before submitting the proposal.
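The risk check can be kept lightweight. The following sketch records which of the warning signs above were observed and suggests a deeper review once a threshold is reached. The threshold of two flags and the `risk_check` name are assumptions for illustration, not an established rule.

```python
RED_FLAGS = [
    "unrealistic delivery timeline",
    "heavy legal exposure",
    "limited buyer access",
    "incomplete requirements",
    "strong incumbent presence",
]


def risk_check(observed, review_threshold=2):
    """Return the red flags raised and whether a deeper review is suggested."""
    unknown = set(observed) - set(RED_FLAGS)
    if unknown:
        raise ValueError(f"unrecognized flags: {sorted(unknown)}")
    # Keep the flags in the same order as the list above.
    raised = [flag for flag in RED_FLAGS if flag in observed]
    return raised, len(raised) >= review_threshold


raised, needs_review = risk_check(
    {"limited buyer access", "incomplete requirements"}
)
print(raised, needs_review)
```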

Benefits of Using a Solution Selection Matrix Template (Excel)

Without a structured review method, proposal teams often rely on instinct when preparing RFP responses. A scoring framework based on common buyer evaluation criteria gives sales, proposal, and solution teams a consistent way to review proposals before submission.

Here are several advantages of applying a structured proposal review process:

- Clear proposal focus: Teams review whether the response clearly addresses technical capability, security, pricing, and delivery expectations.

- Stronger proposal quality: Internal scoring highlights sections that may need clearer explanations or stronger supporting details.

- Fewer last-minute revisions: Teams identify missing details earlier during the proposal review process.

- Better internal coordination: Sales, solutions, and proposal teams review the same criteria when preparing the response.

- Better win and loss analysis: Teams can compare internal proposal scores with buyer feedback after the RFP process.
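For the win and loss comparison in the last point, a simple per-criterion gap is often enough to start with. This sketch assumes the buyer shares scores on the same scale the team used internally, which is not always the case. The names and numbers are hypothetical.

```python
def score_gaps(internal, buyer):
    """Return internal minus buyer score for criteria present in both."""
    return {c: internal[c] - buyer[c] for c in internal if c in buyer}


# Hypothetical scores on an assumed shared 1-5 scale.
internal = {"technical": 4, "security": 4, "pricing": 3}
buyer = {"technical": 4, "security": 2, "pricing": 3}

gaps = score_gaps(internal, buyer)
most_overrated = max(gaps, key=gaps.get)
print(gaps, most_overrated)
```

A positive gap means the team rated itself higher than the buyer did, which points to criteria where the internal review may be too generous.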

Even after reviewing the proposal internally, teams still face another challenge. Large RFPs require accurate answers, current information, and input from multiple subject matter experts. Handling this work manually can slow proposal teams down, which is why many organizations are turning to AI and automation tools to assist with RFP responses.

How Inventive AI Helps Proposal Teams Respond to RFPs Faster

Coordinating input from multiple subject matter experts by hand often slows teams down and increases the risk of inconsistent responses.

Tools like Inventive AI help proposal and sales teams manage these challenges more effectively.

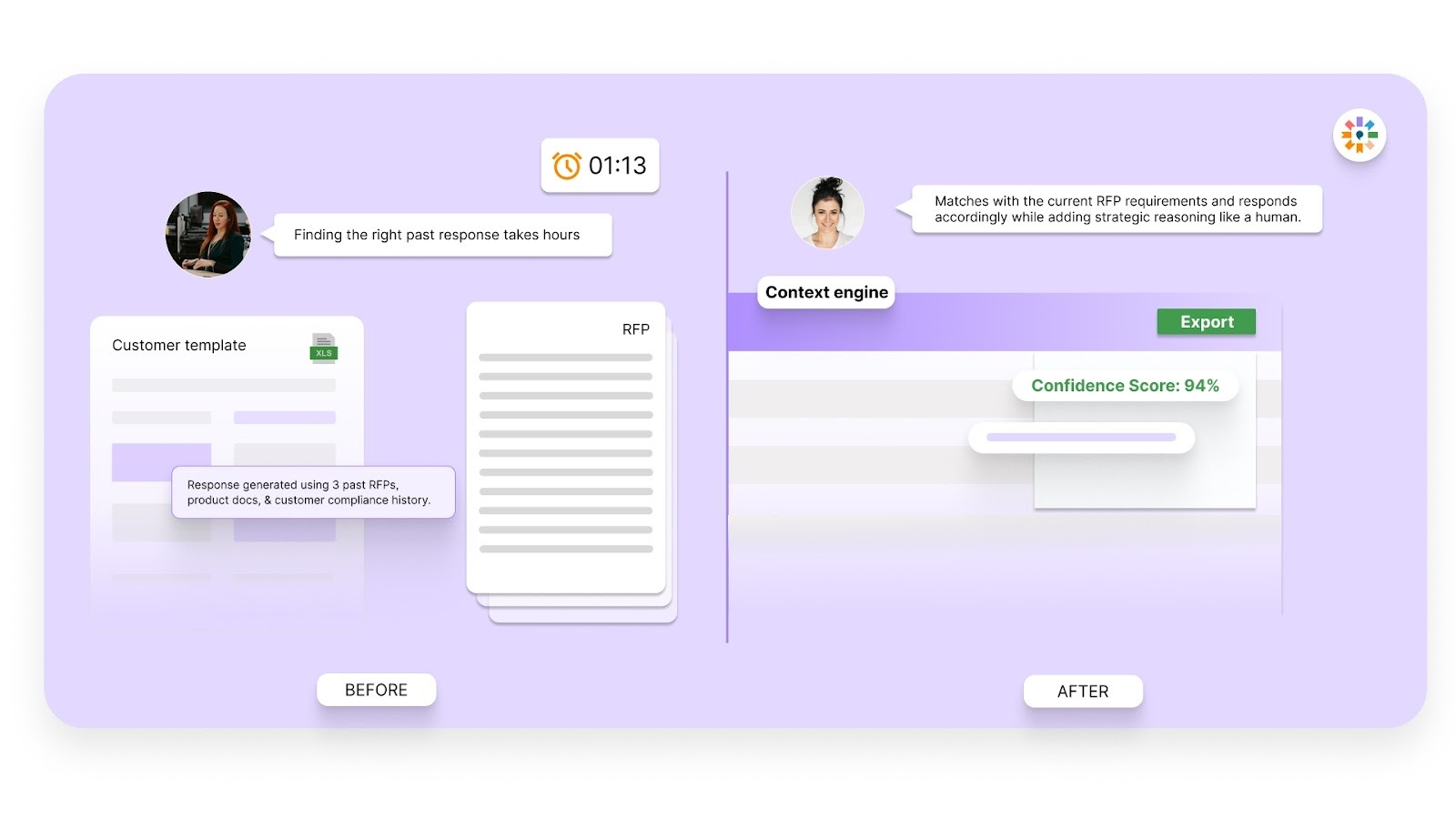

Context Engine

Many AI tools rely on simple lookups from stored documents, which often produce generic answers. Inventive AI applies layered reasoning that reads the full RFP context before generating responses. This approach produces answers grounded in your knowledge sources, making the responses read as if they came directly from an internal expert.

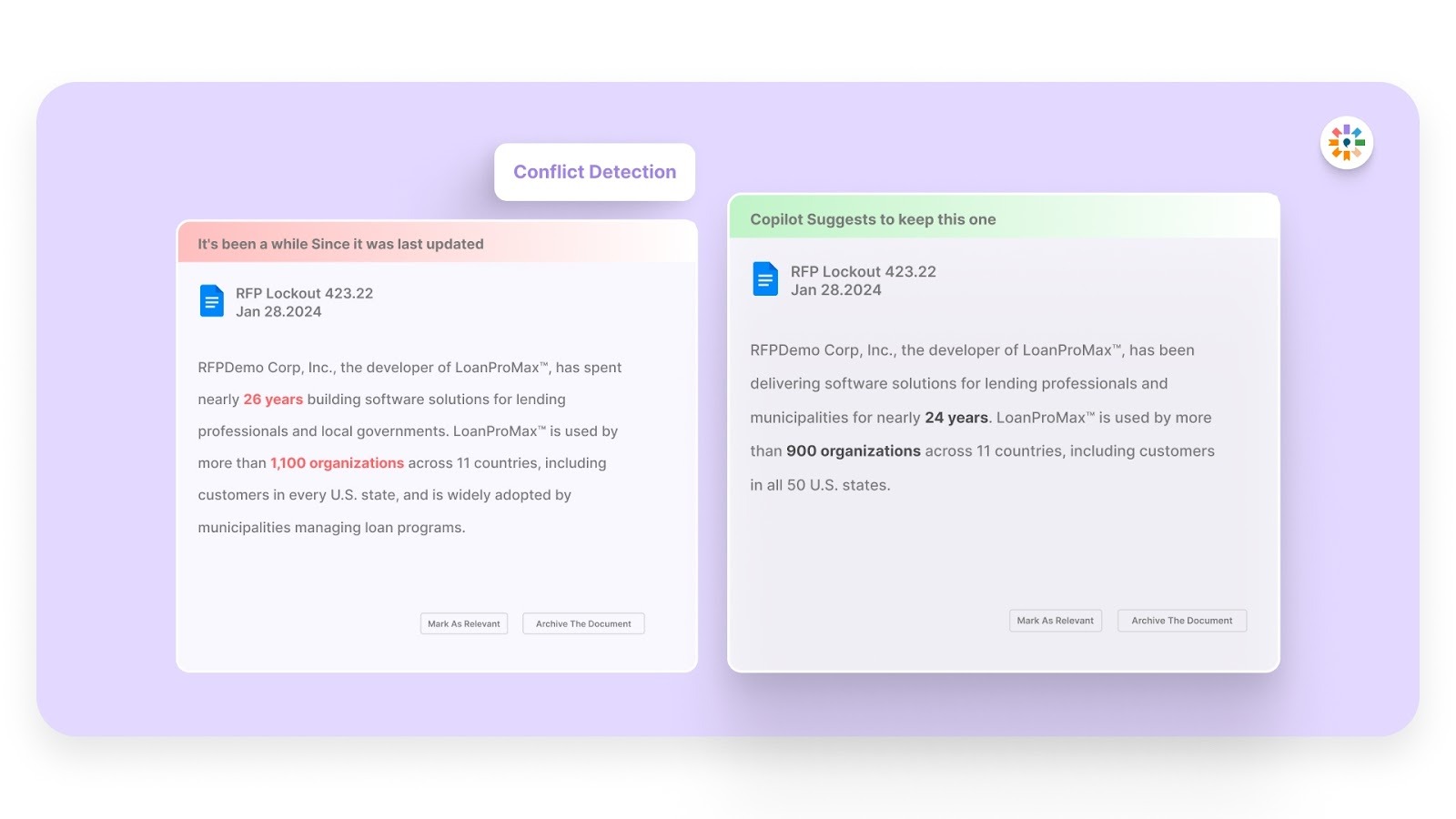

Conflict Detection

Large proposal libraries often contain answers written by different teams over time. This can lead to conflicting statements appearing in the same response. Inventive AI flags these conflicts as soon as they appear so teams can correct them before submitting the proposal.

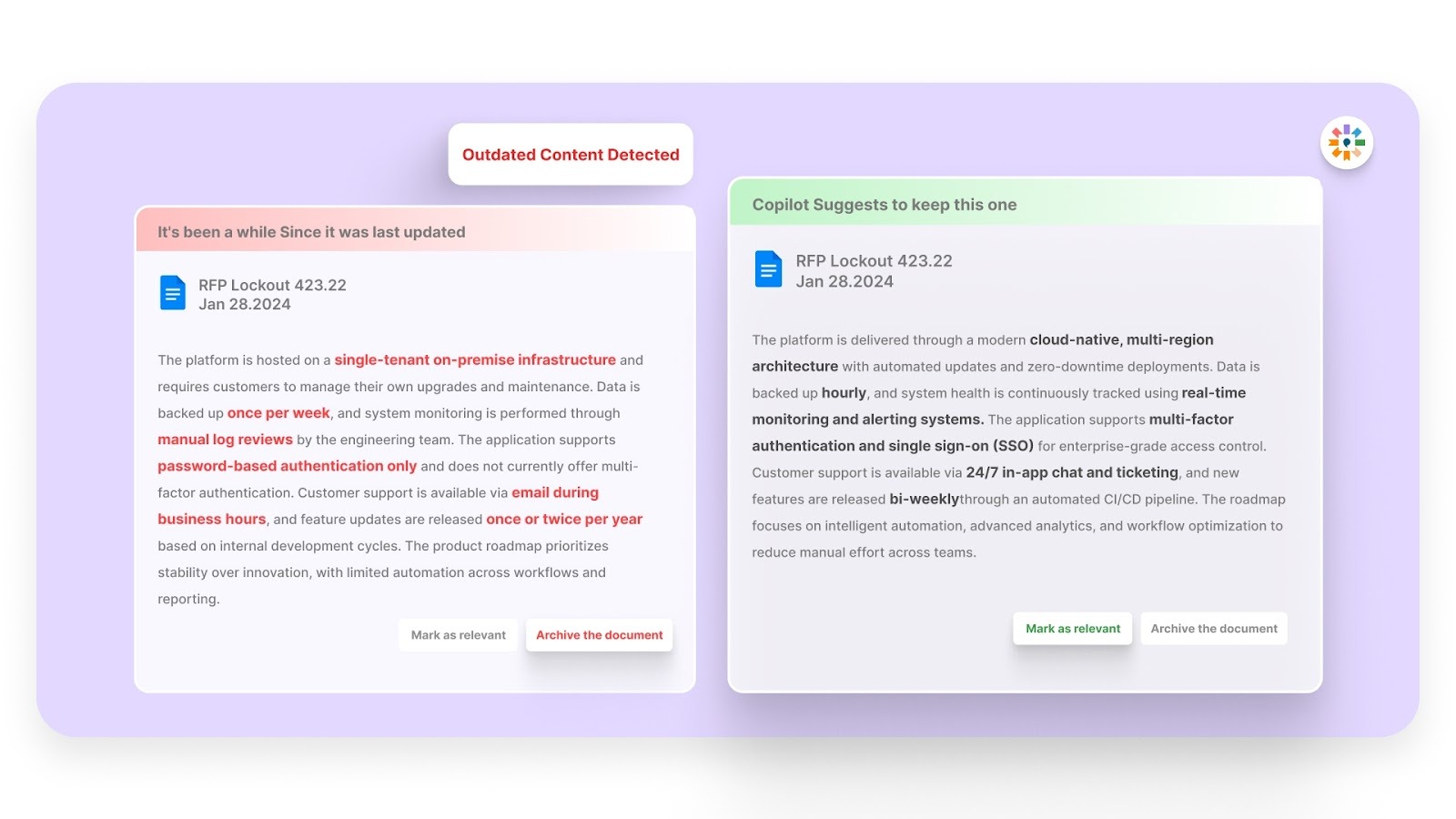

Outdated Content Detection

Many organizations maintain large repositories of past answers that may no longer reflect current product features or compliance requirements. Inventive AI identifies outdated or inconsistent content inside these knowledge sources, helping teams avoid using incorrect information in proposals.

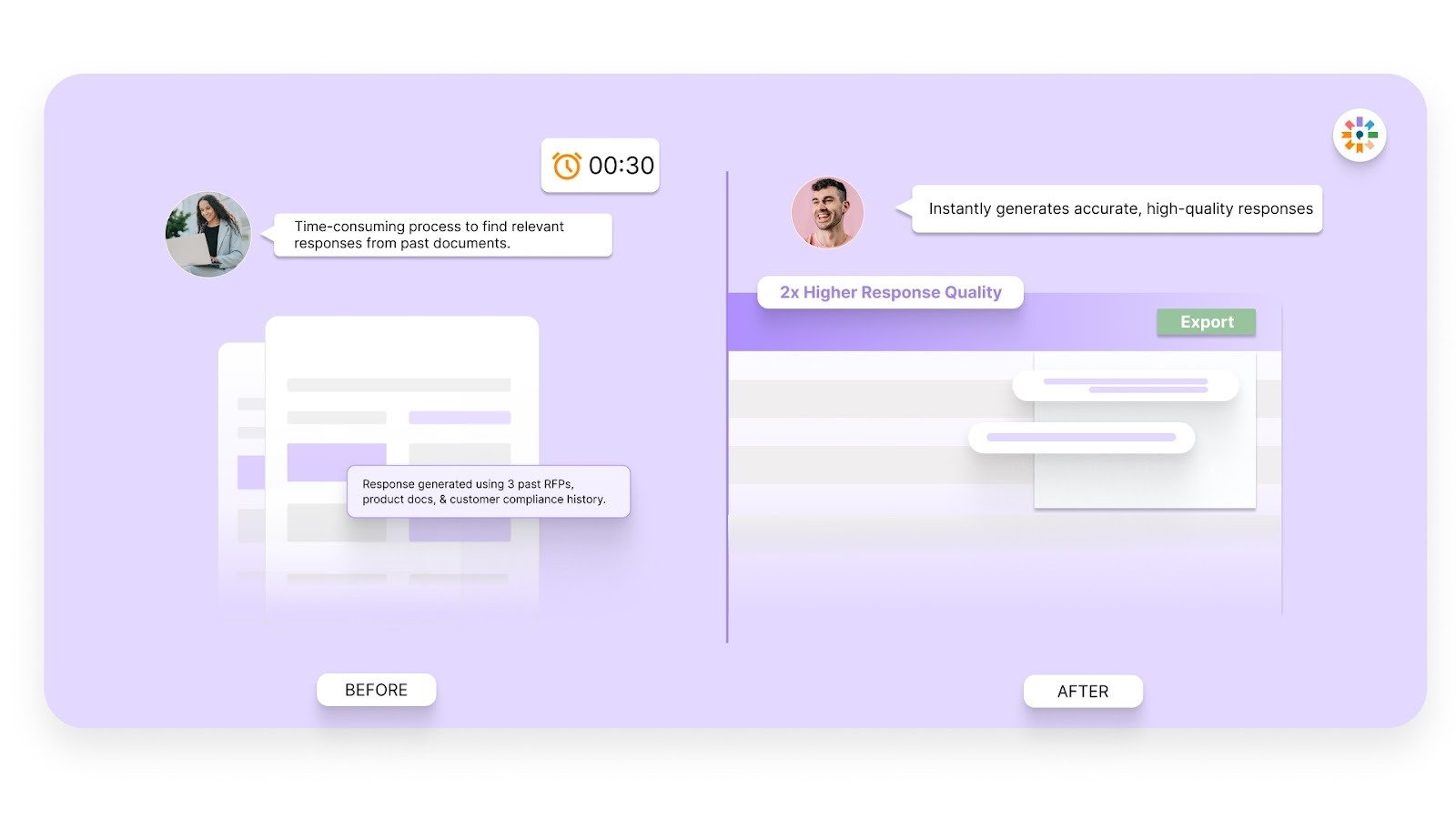

2X Higher Quality Responses

Inventive AI uses multiple AI agents that analyze the purpose behind each RFP question. The system then generates responses that focus on clarity, completeness, and accuracy. This helps proposal teams produce responses that better address buyer expectations across the entire RFP.

Simple and Easy to Use Interface

Adopting new software can be difficult for teams that already manage tight proposal deadlines. Inventive AI focuses on a clear and simple interface so teams can start using the platform quickly. The platform has a full adoption rate across its current customer base and is ranked as the easiest-to-use RFP software on G2.

Narrative-Style Proposals

While most tools only handle short Q&A, Inventive AI generates complete, long-form documents like executive summaries and one-pagers. This allows you to build a cohesive, persuasive story for your entire proposal instead of just filling out a spreadsheet.

If your team wants to reduce response time, improve answer quality, and manage proposal knowledge in one place, you can book a demo with Inventive AI to see how the platform supports faster RFP responses.

FAQs

1. How do you decline an RFP without harming the buyer relationship?

You can decline professionally by explaining the reason clearly, such as timeline conflicts or missing capabilities. A short response thanking the buyer for the opportunity keeps the relationship positive. This approach shows respect for the buyer’s process and keeps the door open for future opportunities that match your offering.

2. Who should assign the scores in a solution selection matrix?

Scoring works best when several roles contribute to the evaluation. The account executive, solution architect, and proposal manager can review the opportunity together. This discussion balances sales enthusiasm with technical and delivery considerations before the proposal timeline begins.

3. How often should teams review the evaluation criteria in the matrix?

Teams should review the scoring criteria at regular intervals, such as every six months or after a major lost deal. This helps teams adjust weighting when market conditions, product capabilities, or buyer expectations change. Small updates keep the scoring model relevant for future opportunities.

4. Can a proposal still succeed if it scores lower in some criteria?

A low-scoring opportunity may still move forward when leadership sees strategic value in the deal. Examples include entering a new industry segment or working with a well-known brand. In these cases, leadership should acknowledge the additional risk and commit the required internal support.

5. How does the matrix support win and loss analysis after the bid?

Comparing the original evaluation score with the final outcome helps teams identify patterns. If certain criteria repeatedly appear in lost deals, the team gains insight into areas that require improvement. Over time, this feedback improves how future opportunities are evaluated.


Knowing that complex B2B software often gets lost in jargon, Hardi focuses on translating the technical power of Inventive AI into clear, human stories. As a Sr. Content Writer, she turns intricate RFP workflows into practical guides, believing that the best content educates first and earns trust by helping real buyers solve real problems.

After witnessing the gap between generic AI models and the high precision required for business proposals, Gaurav co-founded Inventive AI to bring true intelligence to the RFP process. An IIT Roorkee graduate with deep expertise in building Large Language Models (LLMs), he focuses on ensuring product teams spend less time on repetitive technical questionnaires and more time on innovation.